Coalescing Operations to Avoid Duplicated Work

When our apps are small, they often have a guaranteed startup sequence - one we can tightly control to avoid waste. As an app grows, that guarantee disappears. The sequence fragments as features become more independent. As complexity increases, so does the opportunity for waste. Each feature becomes responsible for setting itself up, unaware of what the others are doing or have already done, leading to duplicate work.

Duplicated work is wasted work. And as features multiply, so do the chances we'll undertake duplicated work.

This post will explore how we can determine if work is already underway and how to coalesce that work using Operation and OperationQueue.

This post will gradually build up to a working example. But if you can't wait that long, then head on over to the completed example and take a look to see how things end up.

Looking at What We Need to Build

To coalesce operations is to treat identical requests as one piece of work - even if they arrive from different parts of the app.

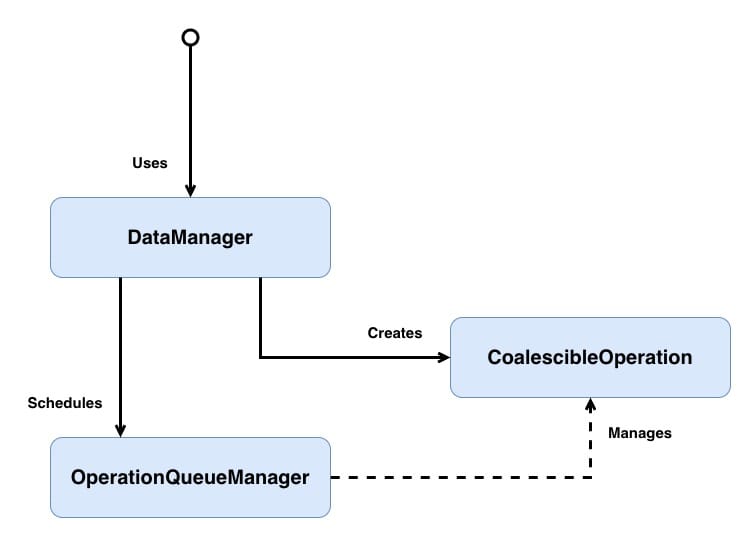

Before jumping into building each component, let's take a moment to look at the overall architecture we're going to put in place and how each component fits into it.

- OperationQueueManager - responsible for queuing or triggering the coalescing of operations and determining if an operation already exists on the queue. Ensures that only unique operations are added to the queue(s).

- DataManager - responsible for abstracting away the operation creation and passing that operation to the OperationQueueManager.

- CoalescibleOperation - an operation that can be coalesced with other matching operations. Coalescing happens by nesting their completion closures.

I've used generic naming in the class diagram, but in the example, we will be working in concrete types, so DataManager will become UserDataManager, and we will create a subclass of CoalescibleOperation named UserFetchOperation.

Don't worry if that doesn't all make sense yet; we will look into each component in greater depth below.

Let's take a small recap of what OperationQueue and Operation are.

OperationQueue is responsible for coordinating the execution of operations. Rather than executing work immediately, it schedules operations based on each operation's readiness, priority, dependencies, and available system resources.

Because OperationQueue maintains visibility over its operations, we can inspect, pause, or cancel them - for example, cancelling in-flight requests when a user logs out.

Under the hood, OperationQueue leverages GCD, allowing it to take advantage of multiple cores without the developer needing to manage threads directly. By default, it will execute as many operations in parallel as the device can reasonably support.
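The inspecting, pausing, and cancelling described above can be sketched as follows. This is a minimal illustration rather than part of the example project: uploadQueue and the empty work blocks are hypothetical, and only standard OperationQueue API is used.

```swift
import Foundation

// A hypothetical queue used only for this illustration.
let uploadQueue = OperationQueue()

// Cap parallelism instead of letting the system decide.
uploadQueue.maxConcurrentOperationCount = 2

// Suspend the queue so nothing starts while we inspect it.
uploadQueue.isSuspended = true

for _ in 1...3 {
    uploadQueue.addOperation {
        // Placeholder work; it never runs because we cancel before resuming.
    }
}

// The queue still knows about every operation it has not finished.
let pendingCount = uploadQueue.operations.count

// Cancel everything, for example when the user logs out.
uploadQueue.cancelAllOperations()
let allCancelled = uploadQueue.operations.allSatisfy { $0.isCancelled }

// Resume so the queue can move the cancelled operations to finished.
uploadQueue.isSuspended = false
uploadQueue.waitUntilAllOperationsAreFinished()
```

Note that the cancelled operations stay on the queue until it is resumed, because the queue still has to attempt each one before it can treat it as finished.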

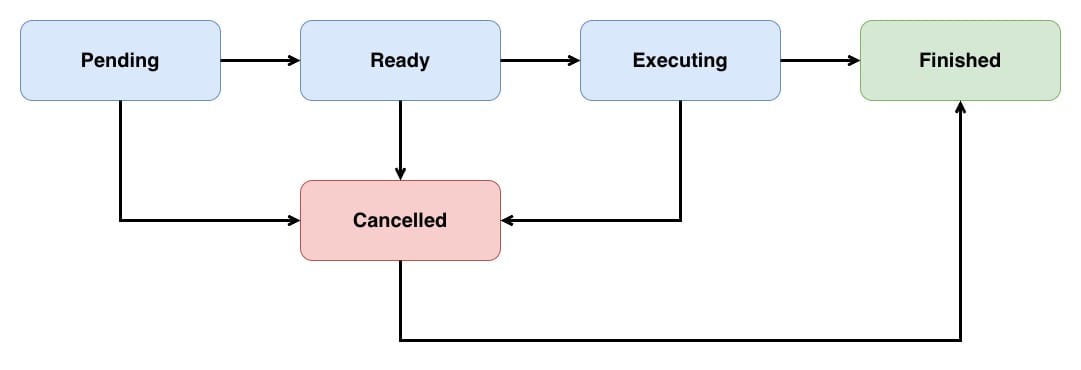

Operation is an abstract class which needs to be subclassed to undertake a specific task. An Operation typically runs on a separate thread from the one that created it. Each operation is controlled via an internal state machine; the possible states are:

- Pending indicates that the operation has been added to the queue.

- Ready indicates that the operation is good to go, and if there is space on the queue, this operation's task can be started.

- Executing indicates that the operation is actually doing work at the moment.

- Finished indicates that the operation has completed its task and should be removed from the queue.

- Cancelled indicates that the operation has been cancelled and should stop its execution.

A typical operation's lifecycle will move through the following states: Pending, Ready, Executing, and then Finished.

It's important to note that cancelling an executing operation will not automatically stop that operation; instead, it is up to the individual operation to clean up after itself and transition into the Finished state.
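As a minimal sketch of what cooperative cancellation looks like, the hypothetical CountingOperation below checks isCancelled between units of work. The onItemProcessed hook is an assumption added purely so the example can cancel itself at a predictable point; it is not part of Operation.

```swift
import Foundation

// A hypothetical non-concurrent operation that works through items one at a
// time and checks `isCancelled` before each unit of work.
final class CountingOperation: Operation {
    private(set) var processed = 0

    // Illustrative hook so the demo can cancel at a known point.
    var onItemProcessed: ((Int) -> Void)?

    override func main() {
        for _ in 0..<1_000 {
            // Cancellation is cooperative: nothing stops us unless we look.
            if isCancelled { return }
            processed += 1
            onItemProcessed?(processed)
        }
    }
}

func runCancellationDemo() -> CountingOperation {
    let operation = CountingOperation()
    operation.onItemProcessed = { [weak operation] count in
        // Cancel part-way through the work.
        if count == 10 { operation?.cancel() }
    }
    // Calling `start` directly runs `main` synchronously on this thread.
    operation.start()
    return operation
}

let cancelledOperation = runCancellationDemo()
```

Without the isCancelled check inside the loop, the operation would carry on through all 1,000 items even though it had been cancelled.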

Operations come in two flavours:

- Non-Concurrent

- Concurrent

Non-Concurrent operations perform all their work on the same thread, so that when the main method returns, the operation is moved into the Finished state. The queue is then notified of this and removes the operation from its active operation pool, freeing resources for the next operation.

Concurrent operations can perform some of their work on a different thread, so returning from the main method can no longer be used to move the operation into a Finished state. Instead, when we create a concurrent operation, we assume the responsibility for moving the operation between the Ready, Executing, and Finished states.

Our CoalescibleOperation will be concurrent so it can support both concurrent and non-concurrent work without changing the abstraction.

Building the Operation

Let's start by implementing the CoalescibleOperation parent class:

    // 1
    class CoalescibleOperation<Value>: Operation {
        // 2
        let identifier: String

        // 3
        private(set) var completionHandler: (_ result: Result<Value, Error>) -> Void

        // 4
        let callBackQueue: OperationQueue

        // MARK: - Init

        // 5
        init(identifier: String,
             callBackQueue: OperationQueue = OperationQueue.current ?? .main,
             completionHandler: @escaping (_ result: Result<Value, Error>) -> Void) {
            self.identifier = identifier
            self.callBackQueue = callBackQueue
            self.completionHandler = completionHandler

            super.init()
        }
    }

1. CoalescibleOperation is a generic subclass of Operation. The type parameter Value represents the success type of the operation's Result - so a network operation returning decoded JSON might be CoalescibleOperation<User>, while one returning an image might be CoalescibleOperation<Image>. This lets subclasses define what a successful result looks like while the coalescing machinery remains type-safe.

2. identifier is a string identifier used to match operations that should be coalesced together.

3. completionHandler is the completion handler that will be called when the operation finishes. It's private(set) because coalescing replaces it with a combined closure that calls both the original and the new handler.

4. callBackQueue is the queue that the completion handler will be dispatched onto, ensuring callbacks happen on the expected queue - typically the queue that created the operation. Not to be confused with the OperationQueueManager.

5. The initialiser captures the caller's completion handler and callback queue. It defaults callBackQueue to whichever queue the operation was created on, falling back to the main queue.

As mentioned, a concurrent operation takes responsibility for ensuring that its internal state is correct. This state is controlled by manipulating the isReady, isExecuting and isFinished properties. However, we can't just set these values directly as they are read-only. Instead, we will need to override these properties and track operations' current state via a different property. When mapping state, enums work best:

    class CoalescibleOperation<Value>: Operation {
        // Omitted other functionality

        // 1
        private enum State {
            case ready
            case executing
            case finished
        }

        // 2
        private var state = State.ready

        // 3
        override var isReady: Bool {
            return super.isReady && state == .ready
        }

        // 4
        override var isExecuting: Bool {
            return state == .executing
        }

        // 5
        override var isFinished: Bool {
            return state == .finished
        }
    }

1. A private enum representing the three operation states that we will control to make CoalescibleOperation into a concurrent operation.

2. The operation starts in the ready state.

3. Maps isReady to use both our state value and the superclass isReady value - super.isReady is managed internally by Operation and tracks things like whether dependencies have finished. By combining them, the operation is only considered ready when both the internal conditions of isReady from Operation are satisfied, and our CoalescibleOperation is ready.

4. Maps isExecuting to our internal state.

5. Maps isFinished to our internal state. When this returns true, the OperationQueue knows to remove the operation from the queue.

OperationQueue uses KVO to know when its operations change state so that it can control the flow of operations. Let's add in KVO support:

    class CoalescibleOperation<Value>: Operation {
        // Omitted other functionality

        // 1
        enum State: String {
            case ready = "isReady"
            case executing = "isExecuting"
            case finished = "isFinished"
        }

        var state = State.ready {
            // 2
            willSet {
                willChangeValue(forKey: newValue.rawValue)
                willChangeValue(forKey: state.rawValue)
            }
            // 3
            didSet {
                didChangeValue(forKey: oldValue.rawValue)
                didChangeValue(forKey: state.rawValue)
            }
        }
    }

1. Each enum case's raw value matches the corresponding Operation property name. This lets us use the raw value directly as the KVO key when notifying observers of state changes.

2. Before the state changes, we notify KVO observers that both the new state's property and the current state's property are about to change. For example, transitioning from ready to executing tells observers that both isExecuting and isReady are about to change.

3. After the state changes, we notify KVO observers that the transition is complete. OperationQueue relies on these KVO notifications to know when an operation has started, finished, or is ready to execute - without them, the queue won't respond to state changes.

With KVO support implemented, let's add in the lifecycle methods to move through the various states:

    // 1
    enum CoalescibleOperationError: Error, Equatable {
        case cancelled
    }

    class CoalescibleOperation<Value>: Operation {
        // Omitted other functionality

        // 2
        override func start() {
            // 3
            guard !isCancelled else {
                finish(result: .failure(CoalescibleOperationError.cancelled))
                return
            }

            // 4
            state = .executing

            // 5
            main()
        }

        // 6
        func finish(result: Result<Value, Error>) {
            guard !isFinished else {
                return
            }

            state = .finished

            // 7
            callBackQueue.addOperation {
                self.completionHandler(result)
            }
        }

        // 8
        override func cancel() {
            super.cancel()

            finish(result: .failure(CoalescibleOperationError.cancelled))
        }
    }

1. A custom error type for cancellation.

2. We override start rather than main because this is a concurrent operation - we're taking responsibility for managing state transitions ourselves. It's important to note that super.start() is intentionally not called, as by overriding start, this operation assumes full control of maintaining its state.

3. If the operation was cancelled before it started, we deliver a cancellation error to all completion handlers and transition to finished. This ensures coalesced callers aren't left waiting indefinitely.

4. Transitions the operation into the executing state, triggering KVO notifications that tell the queue the operation is now active.

5. Calls main where subclasses perform their actual work. Subclasses call finish(result:) when their work is done. main is actually the entry point for non-concurrent operations. By choosing main as the entry point for our concurrent operation too, we reduce the cognitive load on any future developer, as they can transfer their expectations of how non-concurrent operations work to our concurrent operation implementation.

6. finish(result:) is the single exit point for delivering a result. The guard !isFinished prevents double-finishing, which could happen if cancel and the operation's work complete at roughly the same time. It's essential that all operations eventually call this method. If you are experiencing odd behaviour where your queue seems to have jammed, and no operations are being processed, one of your operations is probably missing a finish call somewhere.

7. The completion handler is dispatched onto callBackQueue so the caller receives the result on the queue they expect.

8. cancel calls super.cancel() to set the isCancelled flag, then immediately delivers a cancellation error via finish(result:). This ensures the operation always reaches the finished state as Apple's documentation requires - cancelled alone is not a valid end state.

In older versions of iOS, concurrent operations were responsible for calling main on a different thread - this is no longer the case.

All that's left to do state-wise is to indicate that this is an asynchronous operation by overriding isAsynchronous:

    class CoalescibleOperation<Value>: Operation {
        // Omitted other functionality

        override var isAsynchronous: Bool {
            return true
        }
    }

As CoalescibleOperation subclasses are always expected to be executed via an OperationQueue, overriding isAsynchronous is not strictly needed, but we override it here to express intent clearly. isAsynchronous only matters if we call start directly on the operation without a queue. In that case, the caller is supposed to check isAsynchronous to decide whether to spin up a separate thread.

We've made CoalescibleOperation support concurrent execution, but it doesn't yet coalesce; let's change that:

    class CoalescibleOperation<Value>: Operation {
        // Omitted other functionality

        // 1
        func coalesce(operation: CoalescibleOperation<Value>) -> Bool {
            // 2
            guard !isFinished else {
                return false
            }

            // 3
            let initialCompletionHandler = self.completionHandler

            // 4
            let additionalCompletionHandler = operation.completionHandler
            let additionalCallBackQueue = operation.callBackQueue

            // 5
            self.completionHandler = { result in
                initialCompletionHandler(result)

                additionalCallBackQueue.addOperation {
                    additionalCompletionHandler(result)
                }
            }

            return true
        }
    }

1. coalesce(operation:) takes another CoalescibleOperation with the same Value type. Subclasses are accepted through normal polymorphism. coalesce(operation:) returns a Bool to indicate whether the attempt at coalescing was a success or not.

2. Check to ensure this operation isn't already finished before coalescing.

3. Captures the current completion handler before it is replaced during coalescing. This is important because completionHandler is about to be overwritten, and we need to preserve the existing closure chain.

4. Captures the to-be-coalesced operation's completion handler and callback queue. The callback queue is captured separately because the new operation may expect to be called back on a different queue than the current operation expects.

5. Replaces the current operation's completionHandler with a new closure that calls both the current operation's completionHandler and the to-be-coalesced operation's completionHandler. As more operations are coalesced, this closure chain of completionHandler inside completionHandler gets deeper and deeper. Note that additionalCompletionHandler is triggered onto its own callback queue rather than the existing operation's queue - coalescing shares the work, not the communication channel.
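To make the closure nesting easier to picture, here is a minimal sketch that strips away the operation and the callback queues and keeps only the chaining. The Handler typealias, the chain function, and the caller labels are hypothetical names used for illustration.

```swift
import Foundation

typealias Handler = (Result<String, Error>) -> Void

// Wraps an existing handler and an additional one into a single closure,
// mirroring the nesting used when coalescing (queues left out for brevity).
func chain(_ existing: @escaping Handler, _ additional: @escaping Handler) -> Handler {
    return { result in
        existing(result)
        additional(result)
    }
}

func runChainDemo() -> [String] {
    var received: [String] = []

    // Each "caller" records that it was given the shared result.
    var handler: Handler = { _ in received.append("first caller") }
    handler = chain(handler) { _ in received.append("second caller") }
    handler = chain(handler) { _ in received.append("third caller") }

    // One result fans out to every caller, in the order they were coalesced.
    handler(.success("user"))
    return received
}

let callers = runChainDemo()
```

Each call to chain adds one more layer of nesting, so a single invocation of the outermost closure reaches every handler that was coalesced along the way.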

We've added the ability to coalesce, but CoalescibleOperation as it stands at the moment has multiple race conditions that could crash the app if the mutable state is updated from different threads. Let's protect against that by using an NSRecursiveLock to ensure only one thread can mutate state at any given moment:

    class CoalescibleOperation<Value>: Operation {
        // Omitted unchanged functionality

        // 1
        private let lock = NSRecursiveLock()

        // 2
        private var _state = State.ready {
            willSet {
                willChangeValue(forKey: newValue.rawValue)
                willChangeValue(forKey: _state.rawValue)
            }
            didSet {
                didChangeValue(forKey: oldValue.rawValue)
                didChangeValue(forKey: _state.rawValue)
            }
        }

        // 3
        private var state: State {
            get {
                lock.lock()
                defer { lock.unlock() }

                return _state
            }
            set {
                lock.lock()
                defer { lock.unlock() }

                _state = newValue
            }
        }

        // Omitted unchanged functionality

        // 4
        func finish(result: Result<Value, Error>) {
            lock.lock()
            defer { lock.unlock() }

            // Omitted unchanged functionality
        }

        // 5
        func coalesce(operation: CoalescibleOperation<Value>) -> Bool {
            lock.lock()
            defer { lock.unlock() }

            // Omitted unchanged functionality
        }
    }

1. The recursive lock that will be used to synchronise access to shared mutable state - state and completionHandler. It's recursive because KVO creates re-entrant calls: when the setter updates _state, KVO notifications fire synchronously, which causes OperationQueue to read isFinished/isExecuting/isReady, which calls the getter, which needs to acquire the same lock on the same thread. A non-recursive lock, or dispatch queue, would deadlock here.

2. Added new backing storage for the operation's state to separate the storage from the threading protection. The KVO notifications in willSet and didSet remain on the backing property so they fire within the lock - ensuring observers always see a consistent state.

3. The thread-safe accessor for state. Both the getter and setter acquire the lock before accessing _state. The defer ensures the lock is always released.

4. finish(result:) acquires the lock to ensure that reading isFinished and updating state and completionHandler happens atomically. Without this, a concurrent coalesce(operation:) call could modify completionHandler between the finished check and the handler being read.

5. coalesce(operation:) acquires the lock to ensure it doesn't modify completionHandler while finish(result:) is reading it, and that its isFinished check is consistent with the state it acts on.
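To see why the lock needs to be recursive, here is a minimal sketch that is separate from CoalescibleOperation. ObservedCounter and its onChange hook are hypothetical stand-ins for the state property and a KVO observer that reads the value back while the setter still holds the lock.

```swift
import Foundation

// A hypothetical type whose setter triggers a read of the same value while
// the lock is still held, similar to an observer reading `isFinished` from
// inside the state setter.
final class ObservedCounter {
    private let lock = NSRecursiveLock()
    private var _value = 0

    // Illustrative stand-in for a synchronous change notification.
    var onChange: (() -> Void)?

    var value: Int {
        get {
            lock.lock()
            defer { lock.unlock() }
            return _value
        }
        set {
            lock.lock()
            defer { lock.unlock() }
            _value = newValue
            onChange?() // Runs while the lock is still held.
        }
    }
}

func runReentrancyDemo() -> Int {
    let counter = ObservedCounter()
    var observed = -1
    counter.onChange = { [unowned counter] in
        // Re-enters the lock on the same thread via the getter.
        observed = counter.value
    }
    counter.value = 42
    return observed
}

let observedValue = runReentrancyDemo()
```

Swapping NSRecursiveLock for NSLock in this sketch would make the getter wait on a lock that the same thread already holds, so the assignment would never return.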

See Threading Programming Guide for more details on thread safety.

Technically, using a lock will block the calling thread, which could be the main thread. I could have exposed this blocking, but I've decided that CoalescibleOperation should silently absorb that blocking behaviour, to keep its API simple. Due to this silent blocking, undertaking any significant work inside completionHandler should be avoided or moved onto a different thread.

With our operations now coalescible and thread-safe, it's time to get them queuing and triggering the coalescing.

Queuing those Operations

OperationQueueManager is a wrapper around an OperationQueue instance that detects if an operation with the same identifier already exists on the queue and, if so, coalesces the newer operation into the old one.

    final class OperationQueueManager {
        // 1
        private let queue = OperationQueue()
        private let lock = NSLock()

        // 2
        func enqueue<Value>(operation: CoalescibleOperation<Value>) {
            // 3
            lock.lock()
            defer { lock.unlock() }

            if let matchingOperation = matchingCoalescibleOperation(for: operation) {
                if matchingOperation.coalesce(operation: operation) {
                    return
                }
            }

            queue.addOperation(operation)
        }

        // 4
        private func matchingCoalescibleOperation<Value>(for operation: CoalescibleOperation<Value>) -> CoalescibleOperation<Value>? {
            let matchingOperation = queue.operations
                .lazy
                .compactMap { $0 as? CoalescibleOperation<Value> }
                .first { $0.identifier == operation.identifier }

            return matchingOperation
        }
    }

1. The underlying operation queue that manages the execution of coalescible operations.

2. enqueue(operation:) is the single entry point for adding operations. It first checks if a matching operation is already on the queue. If one exists, the new operation is coalesced into it. If not, the operation is added to the queue as normal. The generic Value parameter is inferred from the operation being passed in, so call sites don't need to specify it.

3. This lock is used to synchronise access to the underlying operation queue so that only one operation can be added at any given time. This prevents the scenario where two different threads attempt to enqueue the same operation at the same time, resulting in the same operation appearing on the queue twice. Unlike in CoalescibleOperation, this lock is a non-recursive lock as we don't expect re-entrant calls.

4. matchingCoalescibleOperation(for:) searches the queue for an operation that matches both the type and identifier of the incoming operation. The lazy chain avoids creating intermediate arrays - it casts each operation to CoalescibleOperation<Value>, discards any that don't match the type, and stops at the first one with a matching identifier.
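The early exit of the lazy chain can be seen in isolation with a small sketch. firstMatch(in:identifier:) is a hypothetical helper that swaps operations for plain values and counts how many elements the cast inspects before a match is found.

```swift
import Foundation

// Searches a mixed array for the first String equal to `identifier`, using
// the same lazy / compactMap / first(where:) shape as the queue lookup.
func firstMatch(in values: [Any], identifier: String) -> (match: String?, inspected: Int) {
    var inspected = 0

    let match = values
        .lazy
        .compactMap { value -> String? in
            inspected += 1          // Count each element the cast looks at.
            return value as? String // Discard anything that isn't a String.
        }
        .first { $0 == identifier }

    return (match, inspected)
}

// "b" is the third element, so the elements after it are never inspected.
let lookup = firstMatch(in: [1, "a", "b", 2, "c"], identifier: "b")
```

Without lazy, compactMap would cast all five elements and build a new array before first(where:) looked at any of them.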

I chose to wrap OperationQueue rather than subclass it as the wrapper gives a clear separation: OperationQueue manages execution, OperationQueueManager manages coalescing.

Now that we have an operation capable of coalescing and a manager that can manage that coalescing, let's build a concrete operation subclass and put it to work.

Fetching Users

In this example, we will build a DataManager to control the fetching of a user's data. The DataManager will act as a coordinator between the operation and the queue manager. Let's start with the operation:

    // 1
    final class UserFetchOperation: CoalescibleOperation<User> {
        // 2
        init(completionHandler: @escaping (_ result: Result<User, Error>) -> Void) {
            super.init(identifier: "UserFetchOperation",
                       completionHandler: completionHandler)
        }

        // 3
        override func main() {
            let user = // Do work here to fetch and build a `User`

            finish(result: .success(user))
        }
    }

1. A concrete operation that fetches a User. By subclassing CoalescibleOperation<User>, it inherits all the coalescing, state management, and callback queue behaviour for free. The only responsibility of this subclass is to define what work to do and what identifier to use.

2. The initialiser hardcodes the identifier to "UserFetchOperation". This means all instances of UserFetchOperation will coalesce with each other - if one is already on the queue, any subsequent requests will share its result rather than triggering duplicate work.

3. main is where the actual work happens. It performs the work to build a User, then calls finish(result:) to deliver the result to all coalesced callers and transition the operation to finished.

UserFetchOperation only cares about its own work; all the infrastructure to support concurrent and coalescible operation is inherited - this allows for very clean subclasses.

Now that we have UserFetchOperation, let's pass it along to OperationQueueManager via UserDataManager:

    final class UserDataManager {
        private let queueManager: OperationQueueManager

        // 1
        init(queueManager: OperationQueueManager) {
            self.queueManager = queueManager
        }

        // 2
        func fetchUser(completionHandler: @escaping (_ result: Result<User, Error>) -> Void) {
            let operation = UserFetchOperation(completionHandler: completionHandler)

            queueManager.enqueue(operation: operation)
        }
    }

1. The queue manager is injected through the initialiser, keeping UserDataManager decoupled from how operations are queued and coalesced. We need to use the same instance of OperationQueueManager where we want coalescing to occur. By injecting it, we can share the instance, without UserDataManager having to care how it is shared.

2. fetchUser(completionHandler:) hides the operation machinery behind a simple closure-based interface - callers don't need to know that their request might be coalesced with an existing one.

To read more on Dependency Injection, check out Let Dependency Injection Lead You to a Better Design.

And with that, we have the infrastructure to build any number of operations that can be automatically coalesced when needed without the caller needing to know anything about coalescing 🕺.

Moving the Complexity

As our apps grow and more features are added, coalescing lets those features remain independent without paying the price for duplicated work. The complexity of coordinating coalescing is centralised in our infrastructure - freeing each feature to focus on meeting the user's needs, not coordinating their work with each other.

To see the complete working example, visit the repository and clone the project.

Note that the code in the repository is unit tested, so it isn't 100% the same as the code snippets shown in this post. The changes mainly involve a greater use of protocols to allow for test-doubles to be injected into the various types and type erasure to overcome the challenges of working with protocols that have associated types. While not hugely different, I didn't want to present the increased complexity necessary for unit testing to get in the way of the core message in this post.